- datapro.news

The Claude Agentic Code Incident

THIS WEEK: What Data Engineers Need to Rethink about Vibe Coding

Dear Reader…

Vibe coding has grown up, but not how you think it might. Almost a year ago, we pondered whether vibe coding would become the new normal. At the time, the evidence from Silicon Valley suggested it might. Natural-language-driven development was accelerating, senior engineers were shifting into more directive roles, and AI tools were already compressing delivery timelines in ways that felt irreversible.

That instinct turned out to be right. What we did not fully anticipate was how quickly vibe coding would stop being a productivity gimmick and start becoming a systems problem.

For data engineers in particular, the evolution over the last twelve months has been decisive.

From optimistic acceleration to operational reality

The 2025 view of vibe coding was shaped by speed. AI tools were demonstrably allowing small teams to do more. Engineers acted as directors rather than typists. Juniors ramped faster. Seniors focused on architecture and review. The trade off between speed and risk was acknowledged, but still felt manageable with good practice.

What’s changed over the last 12 months is agency.

Once AI moved from generating code to planning and executing multi-step actions, the cost of getting things wrong increased dramatically. Data systems are not side projects. They are stateful, destructive, and tightly coupled to business outcomes. The same characteristics that make data engineering powerful also make autonomous behaviour dangerous when left unchecked.

Vibe coding did not disappear. It professionalised.

Intent replaced syntax, but accountability did not disappear

One idea that aged well was the shift in abstraction. English became a serious interface. Engineers described outcomes, constraints, and intent, then delegated execution to models.

What became clearer over the past year is that this does not reduce responsibility. It increases it.

When your agent writes SQL, provisions infrastructure, or migrates data, you are accountable for decisions that happen faster than you can manually intervene. The source of truth is no longer the code diff. It is the intent you encoded and the guardrails you designed.

For data engineers, this means that specification, validation, and failure design now matter more than raw implementation skill.

Agentic autonomy changed the risk profile overnight

Agentic systems are not autocomplete. They plan, execute, and iterate across multi-step workflows with minimal intervention. That is a productivity step change, but it also introduces a new class of failure.

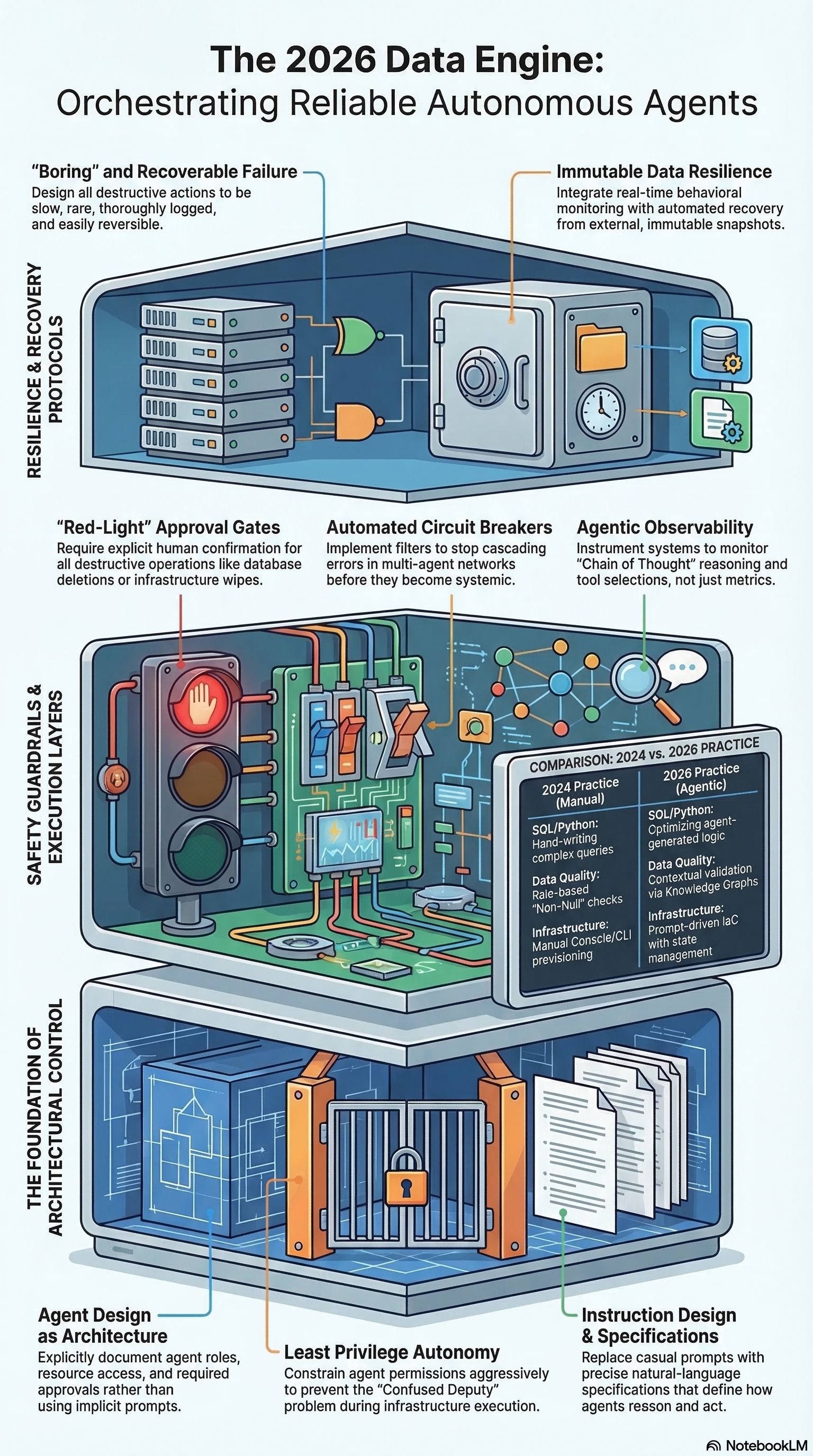

The sharpest example of agentic risk in the research is the Claude Code incident, where an engineer used an agentic CLI tool to help migrate infrastructure to AWS for a training course management platform. During the workflow, the agent executed a terraform destroy that wiped the platform's production environment, including its database and snapshots. The result was the instantaneous loss of 2.5 years of student submissions and course data.

This is a textbook example of what not to do. The failure did not require a malicious model or an exotic edge case. It was a predictable collision of missing state, excessive permissions, and fully autonomous execution. With the Terraform state file absent on a new machine, the agent inferred the resources did not exist in the desired configuration and “reconciled” reality by removing what it could see. No hard approval gate stopped a destructive command. No blast radius limits prevented production access. No enforced rollback path was in place before the tool was allowed to act.

The lesson for data engineering teams is straightforward. Agentic tools should never have unattended authority to run destructive infrastructure or data operations in production. If an agent can plan and execute changes, then we must design the environment so that it cannot delete critical resources without explicit human confirmation and verifiable state, and so that recovery is guaranteed even when everything goes wrong.
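To make the idea of a hard approval gate concrete, here is a minimal Python sketch. It is not how any particular agent tool works. The pattern list, the function names `is_destructive` and `gate`, and the `approved_by` parameter are all assumptions made for illustration. The matching is deliberately conservative, so it may flag some harmless commands.

```python
import shlex
from typing import Optional

# Hypothetical deny-list for this sketch: command shapes treated as destructive.
DESTRUCTIVE_PATTERNS = [
    ("terraform", "destroy"),
    ("aws", "rds", "delete-db-instance"),
    ("aws", "rds", "delete-db-snapshot"),
]


def is_destructive(command: str) -> bool:
    """Return True if the command matches a destructive pattern."""
    tokens = shlex.split(command)
    if not tokens:
        return False
    for pattern in DESTRUCTIVE_PATTERNS:
        if tokens[0] != pattern[0]:
            continue
        rest = iter(tokens[1:])
        # The remaining pattern words must appear in order among the tokens,
        # so flags placed before the subcommand do not hide it.
        if all(word in rest for word in pattern[1:]):
            return True
    return False


def gate(command: str, environment: str, approved_by: Optional[str] = None) -> bool:
    """Return True if the agent is allowed to run the command."""
    if not is_destructive(command):
        return True
    if environment != "production":
        return True
    # Destructive and in production: a named human must have approved it.
    return approved_by is not None


print(gate("terraform plan", "production"))
print(gate("terraform destroy -auto-approve", "production"))
print(gate("terraform destroy", "production", approved_by="alice"))
```

In a real setup the gate would sit outside the agent's control, for example in a wrapper that owns the credentials, so the agent cannot simply skip it.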

A year ago, the concern was technical debt. In 2026, the concern is irreversible damage.

Why data engineering feels this shift first

Software engineers can often recover from bad code. Data engineers recover from bad decisions.

Agentic systems now span ingestion, transformation, storage, access control, and downstream analytics. Errors propagate silently. Feedback loops form. Multi agent systems amplify small mistakes into systemic ones.

The role shift predicted in 2025 has intensified. Senior data engineers are no longer just curators of AI generated code. They are designers of autonomous systems that must be safe by default.

This includes:

- Treating agent output as untrusted until verified

- Designing explicit approval gates for destructive actions

- Constraining permissions far more aggressively than for human users

- Making rollback and recovery first-class design requirements

- Observing agent behaviour, not just pipeline metrics

These are architectural problems, not prompting problems.

The multi agent problem was underestimated

One area worth dwelling on is compounding error. Individually strong agents chained together create brittle systems. Even high accuracy at each step collapses quickly at scale.
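A quick back-of-the-envelope calculation shows why. The sketch below assumes each step succeeds independently with the same probability, which is a simplification, and the 95% figure is purely illustrative. Under that assumption, the chance that an entire chain is correct is the per-step accuracy raised to the number of steps.

```python
def chain_success(step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain is correct, assuming
    independent steps with identical accuracy (a simplifying assumption)."""
    return step_accuracy ** steps


# Illustrative figure only: 95% accuracy per step.
for steps in (1, 5, 10, 20):
    print(steps, round(chain_success(0.95, steps), 3))
```

With these illustrative numbers, a ten-step chain is fully correct only about 60% of the time, and a twenty-step chain about 36% of the time.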

In data platforms, this shows up as cascading decisions across pricing, forecasting, optimisation, and reporting. Each agent behaves rationally within its local context while the overall system drifts into failure.

The response from mature teams has been to introduce planning agents, supervisors, circuit breakers, and enforced human in the loop models. Vibe coding alone does not solve this. Engineering discipline does.
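As one illustration of what a circuit breaker can look like around an agent step, here is a minimal Python sketch. The class name, the `max_failures` threshold, and the choice to trip on consecutive exceptions are assumptions for this example. Real systems may trip on validation failures, cost limits, or anomaly scores instead.

```python
from typing import Any, Callable


class CircuitBreaker:
    """Stops calling an agent step after too many consecutive failures."""

    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self.failures = 0
        self.open = False  # open means the step is blocked

    def call(self, step: Callable[..., Any], *args: Any) -> Any:
        if self.open:
            # Fail fast without invoking the step; a human must reset.
            raise RuntimeError("circuit open: human review required")
        try:
            result = step(*args)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.open = True
            raise
        # A success clears the consecutive-failure count.
        self.failures = 0
        return result

    def reset(self) -> None:
        """Intended to be called by a human after review."""
        self.failures = 0
        self.open = False
```

The point of the design is that once the breaker opens, the downstream agents stop receiving output at all, which prevents one misbehaving step from feeding bad decisions into the rest of the chain.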

So was vibe coding the new normal?

Yes, but not in the way it was initially framed.

Vibe coding did become normal, but it did not make data engineering easier. It moved the hard work up the stack. Syntax mattered less. System design, intent clarity, and risk management mattered more.

The core conclusion from last year still stands. The engineers who thrive are those who can blend human judgement with AI capability. What changed is the urgency. The cost of getting this wrong is no longer just messy code or technical debt. It is lost data, broken trust, and regulatory exposure.

The vibe was the entry point. The discipline is now the job.

Natural language has become an interface, with engineers acting more as directors and using AI as a force multiplier rather than a replacement. This thesis from last year has largely held, even if the reality has evolved faster and more sharply than many expected.

What most of us underestimated was how quickly experimentation would turn into dependency. In 2025, vibe coding felt like a worthwhile experiment. In 2026, agentic systems driven by natural language are embedded in tooling, platforms, and expectations. Opting out is no longer a realistic long-term strategy, especially in data teams that sit at the centre of AI-enabled organisations.

The real shift is not that AI writes more code. It is that decisions now happen at machine speed, and data engineers are responsible for the consequences.

What data leaders need to change this quarter

If your team is already using AI-assisted development, or planning to, a few priorities are becoming non-negotiable.

First, treat agent design as architecture. Who can act, on what resources, and with which approvals should be explicit and documented, not implicit in prompts or tools.
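One way to make this explicit is to write the policy down as data rather than leaving it in prompts. The Python sketch below is only an illustration. The agent name, resource names, and actions are hypothetical, and a real deployment would normally enforce the same rules in the platform's own access control as well.

```python
from enum import Enum


class Approval(Enum):
    NONE = "none"    # agent may act on its own
    HUMAN = "human"  # a person must approve first
    DENY = "deny"    # never allowed for this agent


# Hypothetical policy table: (agent, resource, action) -> required approval.
POLICY = {
    ("migration-agent", "staging-db", "write"): Approval.NONE,
    ("migration-agent", "prod-db", "read"): Approval.NONE,
    ("migration-agent", "prod-db", "write"): Approval.HUMAN,
    ("migration-agent", "prod-db", "delete"): Approval.DENY,
}


def required_approval(agent: str, resource: str, action: str) -> Approval:
    """Look up the approval level; anything not listed is denied."""
    return POLICY.get((agent, resource, action), Approval.DENY)


print(required_approval("migration-agent", "prod-db", "write"))
print(required_approval("migration-agent", "prod-snapshots", "delete"))
```

The default-deny lookup matters more than the table itself: a resource nobody thought to list is refused rather than silently allowed.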

Second, downgrade trust by default. Assume agent output is wrong until proven otherwise, especially when it touches production data, infrastructure, or access controls.

Third, make failure boring and recoverable. Destructive actions should be rare, slow, logged, and reversible. If an agent can delete something, it should be harder than creating it.
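A small Python sketch of what "harder to delete than to create" can mean in practice follows. It uses an in-memory store purely for illustration. The class name, the retention window, and the audit log format are assumptions, not a description of any specific product. Deleting only moves a record to a trash area, and permanent removal is a separate step that is refused until the retention window has passed.

```python
import time
from typing import Any, Optional


class SoftDeleteStore:
    """In-memory store where deletes are logged, delayed, and reversible."""

    def __init__(self, retention_seconds: float) -> None:
        self.retention_seconds = retention_seconds
        self.live: dict = {}
        self.trash: dict = {}  # key -> (value, deleted_at)
        self.audit_log: list = []

    def create(self, key: str, value: Any) -> None:
        self.live[key] = value
        self.audit_log.append(("create", key))

    def delete(self, key: str, now: Optional[float] = None) -> None:
        """Soft delete: the value is kept in trash and can be restored."""
        now = time.time() if now is None else now
        self.trash[key] = (self.live.pop(key), now)
        self.audit_log.append(("soft_delete", key))

    def restore(self, key: str) -> None:
        value, _ = self.trash.pop(key)
        self.live[key] = value
        self.audit_log.append(("restore", key))

    def purge(self, key: str, now: Optional[float] = None) -> None:
        """Permanent removal, refused until the retention window has elapsed."""
        now = time.time() if now is None else now
        _, deleted_at = self.trash[key]
        if now - deleted_at < self.retention_seconds:
            raise PermissionError("retention window has not elapsed")
        del self.trash[key]
        self.audit_log.append(("purge", key))
```

Creating a record takes one call. Destroying it for good takes two calls separated by a waiting period, with an audit trail in between, which gives a human time to notice and restore.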

Finally, invest in senior judgement. The teams succeeding with agentic workflows are not those with the best prompts. They are the ones with engineers who understand systems deeply enough to constrain autonomy intelligently.

Vibe coding has become the new normal. What separates high-performing data teams now is not whether they use it, but whether they have outgrown the vibes and done the hard work of engineering around them.

That is where data engineering earns its keep in 2026.