- datapro.news

The Token Reckoning: Your AI Bill Is About to Hurt

THIS WEEK: Every major AI vendor is pulling the subsidy rug from under your pipelines at once, and most engineering teams won't notice until the invoice arrives.

Dear Reader…

The cheap AI era is not ending with a press release. It is ending with a contract renewal, and for some organisations, it is ending with a bill they never saw coming.

Deloitte's Q4 2025 analysis found that multi-agent deployments had driven some large clients to "tens of millions" in monthly inference bills, figures that bore no relationship to the flat-rate assumptions baked into the original business cases. Notion disclosed that AI infrastructure costs were consuming approximately 10 percent of profit margins. A technology startup documented a 46,000 percent billing increase over 48 hours after an agentic workflow entered a context loop. A compromised API key at another firm generated an $82,314 invoice in a single session. In every case, the root cause was the same: consumption-based billing, no instrumented ceiling, and no one specifically owning AI spend.

These are not edge cases. They are the leading edge of a structural shift that every major frontier provider is now accelerating. OpenAI has signalled publicly that unlimited plans cannot survive contact with actual usage economics. Google cut Gemini API free tier quotas by between 50 and 80 percent in late 2025 and introduced enforced billing caps in April 2026. Microsoft removed free Copilot Chat access for organisations above 2,000 seats in the same month. And Anthropic, the company whose models underpin more production data pipelines than any other frontier provider, has executed the most comprehensive restructure of all: it is moving enterprise customers from flat-rate subscriptions to consumption-based billing whilst simultaneously removing volume discounts.

This week, we examine exactly what has changed across the vendor landscape, why it was inevitable, and what your organisation needs to do before the repricing finds you unprepared.

The Subsidy Is Being Withdrawn

For most of Anthropic's commercial life, the pricing story was simple. Pay a subscription, get Claude. That model is now officially closed to new customers and will cease to exist for existing ones at their next contract renewal.

The new enterprise structure replaces previous seat tiers with two role-based products. Claude Code is priced at $20 per user per month for technical staff; Claude.ai is priced at $10 per user per month for business users. Those seat charges now cover platform access only. Usage is billed separately at standard API rates based on actual token consumption.

There is more. Customers must accept a monthly spending commitment based on Anthropic's estimate of their token use, and pay that amount whether usage reaches the estimate or not. The changes also eliminate API volume discounts of between 10 and 15 percent that previously applied to larger enterprise customers.

Read that last point again. Volume discounts have been removed at the same time as usage-based billing has been introduced. The cost structure moved in both directions simultaneously, and neither direction favoured the customer.

This Is Not a Pricing Decision. It Is a Capacity Rationing Decision.

Context matters here. Blackwell GPU rental prices climbed 48 percent in two months. CoreWeave raised prices more than 20 percent late last year. Bank of America expects compute demand to outstrip supply through 2029.

In late March 2026, Anthropic quietly tightened session limits for Pro and Max users during peak hours. Claude Code's prompt-cache time-to-live shifted from one hour back to five minutes in early March, materially increasing quota burn for long coding sessions.

These are not the decisions of a company repricing for profit. They are the decisions of a company managing a genuine resource constraint. One analyst put it plainly: "It is very possible that Anthropic is acquiring customers faster than it can scale capacity, and the unit economics simply do not work at the old prices."

Anthropic's CEO Dario Amodei has acknowledged the underlying tension himself. Data centres take one to two years to build, meaning companies are committing billions now for demand they cannot yet verify. "If you're off by a couple of years, that can be ruinous," he said. Anthropic has clearly run that spreadsheet. What it found there is driving the restructure we are seeing now.

Four Risks Your Organisation Is Not Yet Pricing In

1. Your pipeline cost models are already wrong

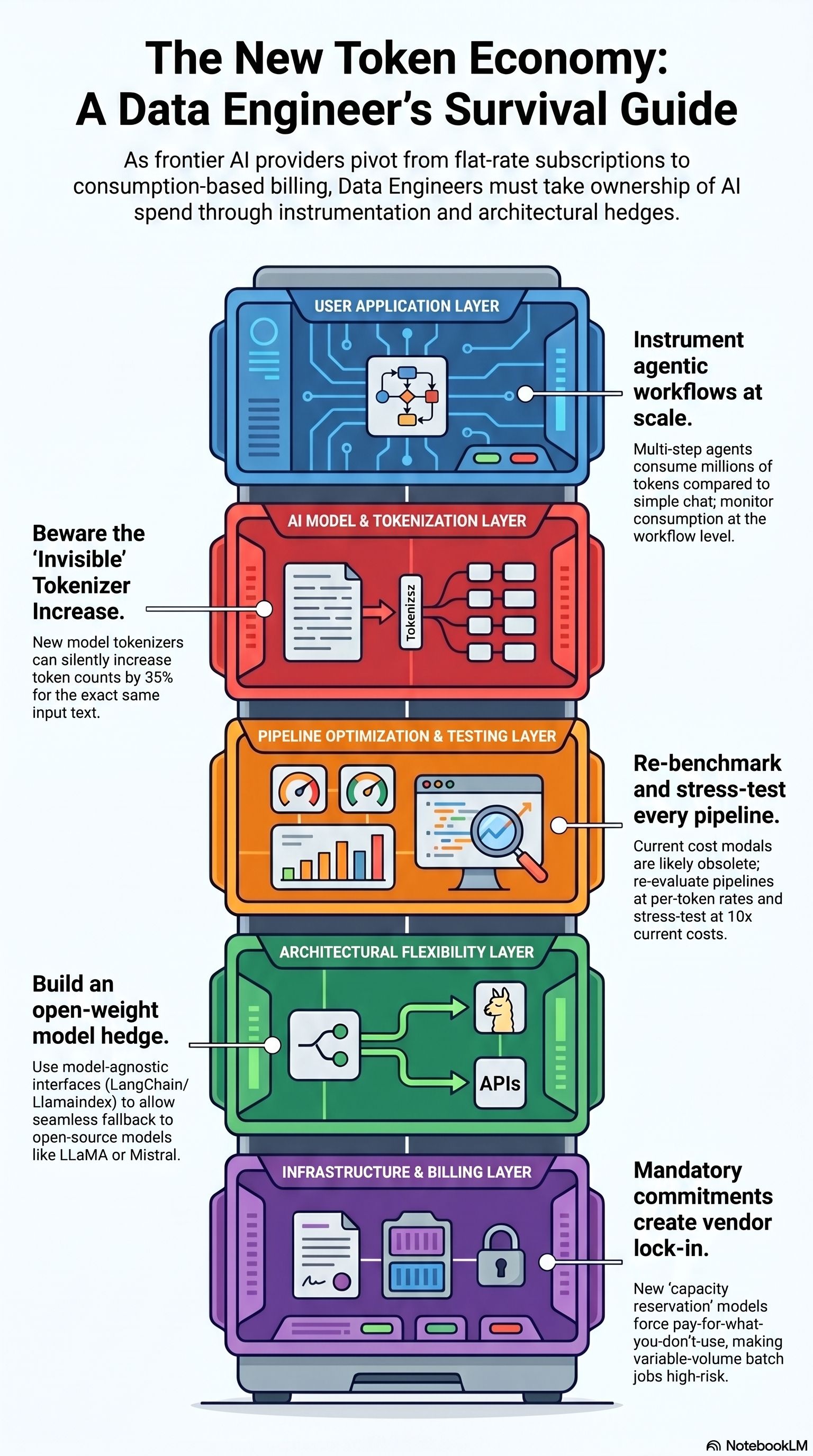

Any data pipeline with an LLM enrichment step (entity extraction, classification, summarisation, document parsing) that was costed using pre-2026 pricing or flat-rate subscription economics is operating on a model that no longer reflects reality. Re-benchmark every AI-dependent pipeline against current per-token rates today, then stress-test at three times and ten times those rates as a planning assumption. That is not pessimism. It is data stewardship applied to your own cost infrastructure.
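The re-benchmarking exercise can be sketched in a few lines of Python. This is a minimal illustration, not a costing tool: the `PipelineProfile` class, the pipeline name, and the token volumes are hypothetical, and the baseline rates are simply the $5 and $25 per million tokens quoted in this article. Substitute the rates and volumes from your own contract and telemetry.

```python
from dataclasses import dataclass

# Assumed baseline rates in USD per million tokens, taken from the figures
# quoted in this article. Replace them with the rates on your own contract.
INPUT_RATE_PER_MTOK = 5.00
OUTPUT_RATE_PER_MTOK = 25.00


@dataclass(frozen=True)
class PipelineProfile:
    """Hypothetical monthly token volumes for one LLM-dependent pipeline."""
    name: str
    input_tokens: int
    output_tokens: int


def monthly_cost(profile: PipelineProfile, rate_multiplier: float = 1.0) -> float:
    """Monthly USD cost at the baseline rates scaled by rate_multiplier."""
    input_cost = profile.input_tokens / 1_000_000 * INPUT_RATE_PER_MTOK
    output_cost = profile.output_tokens / 1_000_000 * OUTPUT_RATE_PER_MTOK
    return (input_cost + output_cost) * rate_multiplier


def stress_test(profile: PipelineProfile, multipliers=(1, 3, 10)) -> dict:
    """Cost at current rates and at each planning multiplier."""
    return {m: round(monthly_cost(profile, m), 2) for m in multipliers}


# Illustrative volumes only: 400M input and 40M output tokens per month.
enrichment = PipelineProfile("entity-extraction", 400_000_000, 40_000_000)
for multiplier, cost in stress_test(enrichment).items():
    print(f"{enrichment.name} at {multiplier}x rates: ${cost:,.2f} per month")
```

Running the same profile through all three multipliers makes it obvious which pipelines stop being viable first if rates move.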

2. Agentic workloads are disproportionately exposed

The token economics of agentic workflows are categorically different from those of conversational use. A multi-step data quality agent that iterates across a document corpus, calls tools, writes to memory, and self-corrects is not consuming hundreds of tokens per session. It is consuming millions. At current rates, one million input tokens cost $5 and one million output tokens cost $25. Anthropic has identified and closed the flat-rate plans that made those workloads viable. Data engineers building orchestration layers for pipeline automation or anomaly detection must instrument token consumption at the workflow level, not just the API call level.
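Workflow-level instrumentation with a hard ceiling might look something like the sketch below. The `WorkflowTokenLedger` class and `TokenBudgetExceeded` exception are hypothetical names invented for illustration. The sketch assumes your orchestration layer can read input and output token counts from each model response and report them against a workflow identifier.

```python
from collections import defaultdict


class TokenBudgetExceeded(RuntimeError):
    """Raised when a workflow run crosses its configured token ceiling."""


class WorkflowTokenLedger:
    """Aggregates token usage per workflow run rather than per API call."""

    def __init__(self, ceiling_tokens: int):
        self.ceiling_tokens = ceiling_tokens
        self._usage = defaultdict(lambda: {"input": 0, "output": 0, "calls": 0})

    def record(self, workflow_id: str, input_tokens: int, output_tokens: int) -> None:
        """Add one call's usage and stop the workflow if the ceiling is crossed."""
        usage = self._usage[workflow_id]
        usage["input"] += input_tokens
        usage["output"] += output_tokens
        usage["calls"] += 1
        if self.total(workflow_id) > self.ceiling_tokens:
            raise TokenBudgetExceeded(
                f"{workflow_id} used {self.total(workflow_id)} tokens "
                f"in {usage['calls']} calls (ceiling {self.ceiling_tokens})"
            )

    def total(self, workflow_id: str) -> int:
        """Total input plus output tokens recorded for a workflow so far."""
        usage = self._usage.get(workflow_id, {"input": 0, "output": 0})
        return usage["input"] + usage["output"]


# Simulated agent loop: each step reports the usage of one model call.
ledger = WorkflowTokenLedger(ceiling_tokens=2_000_000)
try:
    for step in range(100):
        ledger.record("dq-agent-run-42", input_tokens=90_000, output_tokens=10_000)
except TokenBudgetExceeded as exc:
    print(f"halted: {exc}")
```

The point of raising inside `record` is that a runaway loop is stopped by the ledger itself instead of being discovered on the invoice.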

3. The spending commitment is a new form of vendor lock-in

The mandatory monthly spending commitment deserves scrutiny that most procurement teams have not yet given it. This is no longer a utility model. It is a capacity reservation model: commit to a floor, pay overages above it. Teams that depend on variable-volume workloads (batch jobs that spike at month-end, seasonal data processing, event-driven enrichment pipelines) are particularly exposed. Committing to a floor based on peak estimates means overpaying in troughs. Committing based on averages means overage charges at peaks.
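A small worked example shows how the choice of floor plays out for a spiky workload. The usage figures are invented, and the sketch assumes the simplest possible billing rule: each month you pay the larger of the commitment and actual usage, with overage charged at the same rate as committed usage. Real contract terms may differ, so treat this as a way to frame the question for procurement, not as a description of any vendor's invoice logic.

```python
def billed_amount(usage_usd: int, commitment_usd: int) -> int:
    """Assumed rule: pay the committed floor, or actual usage if it is higher."""
    return max(usage_usd, commitment_usd)


def commitment_summary(monthly_usage_usd: list, commitment_usd: int) -> dict:
    """Total billed, total actually used, and spend that bought no tokens."""
    billed = sum(billed_amount(u, commitment_usd) for u in monthly_usage_usd)
    used = sum(monthly_usage_usd)
    return {"billed": billed, "used": used, "unused_commitment": billed - used}


# Hypothetical quarter: two quiet months followed by a month-end batch spike.
usage = [2_000, 2_000, 8_000]

peak_based = commitment_summary(usage, commitment_usd=8_000)
average_based = commitment_summary(usage, commitment_usd=4_000)

print("floor set at peak:   ", peak_based)
print("floor set at average:", average_based)
```

Under these assumptions the peak-based floor bills $24,000 for $12,000 of usage, while the average-based floor bills $16,000. Neither matches pure pay-as-you-go, which is the exposure the spending commitment creates for variable workloads.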

4. The new tokeniser is an invisible cost increase

The latest Anthropic models use a new tokeniser that may consume up to 35 percent more tokens for the same fixed text. A pipeline migrated to a newer model for performance reasons will silently consume significantly more tokens for identical input, without any change in application logic and without any explicit price increase announcement. Any cost benchmarking done on older model versions is invalidated. Run tokeniser comparison tests on representative samples of your production data before migrating to any newer model version.
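A tokeniser comparison test needs little more than two token counters and a representative sample. The sketch below is deliberately vendor-neutral: `token_inflation` is a hypothetical helper, and the two counters shown are crude stand-ins so the example runs without network access. In practice each counter would wrap whatever token-counting facility your provider or local tokeniser library offers for the old and new model versions.

```python
from typing import Callable, Iterable

# A counter maps a piece of text to the number of tokens a model would bill.
TokenCounter = Callable[[str], int]


def token_inflation(
    samples: Iterable[str],
    old_counter: TokenCounter,
    new_counter: TokenCounter,
) -> float:
    """Fractional increase in total tokens when moving from old to new."""
    texts = list(samples)  # materialise so the samples can be counted twice
    old_total = sum(old_counter(text) for text in texts)
    new_total = sum(new_counter(text) for text in texts)
    if old_total == 0:
        raise ValueError("old tokeniser produced zero tokens for the sample set")
    return new_total / old_total - 1.0


# Stand-in counters for illustration only. They are NOT real tokenisers.
def old_stub(text: str) -> int:
    return len(text.split())


def new_stub(text: str) -> int:
    words = len(text.split())
    return words + words // 2


production_samples = ["a b c d", "e f g h i j"]
inflation = token_inflation(production_samples, old_stub, new_stub)
print(f"token inflation on sample: {inflation:.0%}")
```

Multiply the measured inflation into your per-pipeline cost model before migrating, because the per-token price can stay flat while the bill does not.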

The Open-Weight Hedge

This is not an argument for abandoning frontier model access. It is an argument that no production pipeline should have only a frontier model access path.

Meta's Llama family, Mistral, and Qwen are not theoretical alternatives. They are production-grade models available today, with no per-token pricing when self-hosted, no vendor lock-in, and far less exposure to the repricing events described above. For classification, entity extraction, structured data generation, and document summarisation at pipeline scale, they are already competitive with frontier models across a wide range of benchmarks. The data engineer who has invested in infrastructure to run open-weight models, whether on-premise, on reserved cloud instances, or through inference providers such as Together, Fireworks, or Groq, holds a genuine hedge against the commercial risks that are emerging.

What You Should Be Doing Right Now

Instrument your consumption. Does your organisation have per-pipeline, per-workflow token visibility today? If not, you are flying blind into a metered billing environment.

Re-cost every AI-dependent workload. Benchmark at current per-token rates. Stress-test at three and ten times those rates. Account for the tokeniser change when migrating to newer model versions.

Review your contract terms. Know whether your current Anthropic agreement is a legacy flat-rate plan or usage-based. Know when it renews and what spending commitment you are being asked to accept.

Abstract your model dependencies. Are your LLM API calls behind a model-agnostic interface (LangChain, LlamaIndex, or a custom router) that would allow you to switch providers without rewriting application logic?

Assign FinOps ownership. Who in your organisation owns AI spend governance? If the answer is unclear, the transition to usage-based billing will find that gap before you do.
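Of the actions above, abstracting model dependencies is the one most easily shown in code. If you are not using a framework such as LangChain or LlamaIndex, a custom router can be very small. The sketch below is one possible shape, with hypothetical names throughout (`CompletionBackend`, `CallableBackend`, `ModelRouter`), and the backends are stubs standing in for a frontier API client and a self-hosted open-weight model.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


class CompletionBackend(Protocol):
    """Anything that can turn a prompt into text, regardless of vendor."""
    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class CallableBackend:
    """Adapts any prompt -> text function (SDK call, local model) to the protocol."""
    name: str
    fn: Callable[[str], str]

    def complete(self, prompt: str) -> str:
        return self.fn(prompt)


class ModelRouter:
    """Tries backends in priority order and falls back on failure."""

    def __init__(self, backends: list):
        if not backends:
            raise ValueError("at least one backend is required")
        self.backends = backends

    def complete(self, prompt: str) -> tuple:
        """Return (backend_name, text) from the first backend that succeeds."""
        errors = []
        for backend in self.backends:
            try:
                return backend.name, backend.complete(prompt)
            except Exception as exc:  # broad on purpose: any failure triggers fallback
                errors.append(f"{backend.name}: {exc}")
        raise RuntimeError("all backends failed: " + "; ".join(errors))


# Stub backends for illustration: the frontier call fails, the fallback answers.
def frontier_stub(prompt: str) -> str:
    raise TimeoutError("quota exhausted")


def open_weight_stub(prompt: str) -> str:
    return prompt.upper()


router = ModelRouter([
    CallableBackend("frontier", frontier_stub),
    CallableBackend("open-weight", open_weight_stub),
])
print(router.complete("classify this record"))
```

Application code calls `router.complete` and never imports a vendor SDK directly, so changing provider, or reordering priority when prices move, becomes a configuration change.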

Closing Thought

Anthropic's pricing pivot is not evidence that the AI industry is stable and maturing. It is evidence that the companies inside the industry know the subsidy is unsustainable, and are repositioning their cost structures before they have to absorb it themselves.

The bill shock stories above are not anomalies from careless teams. They are the predictable result of building production AI workloads under a pricing model that the vendors themselves knew could not last.

What is now visible across every major provider is a coordinated, if unannounced, withdrawal of the subsidies that made early AI adoption so commercially painless. The direction of travel is consistent and, at this point, irreversible. The question for data engineering teams is not whether repricing will affect them. It is whether they will be ready when it does.

The organisations that instrument token consumption at the workflow level, stress-test their cost models, and establish clear FinOps ownership now will find the transition manageable. Those that continue to treat AI API access as a cheap background utility, governed by nobody in particular, will discover the new economics the same way the examples above did: suddenly, and at scale.

Token economics are now a core engineering discipline. The vendors have made that decision on your behalf. The only remaining question is whether your organisation has made it too.